|

|

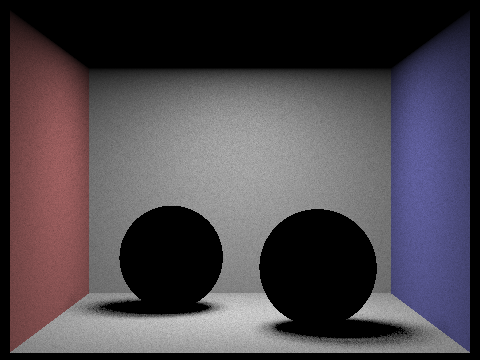

For ray generation, I compute a random point inside the pixel using the sampler get_sample() funtion and cast a ray from the camera into world space.

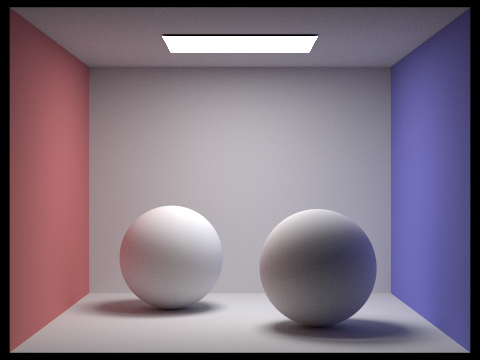

As for the primitive intersections, I calculated the intersection points of the ray with the surface of the sphere or plane of the triangle, made sure the intersection point is in bounds, and set/return fields appropriately.

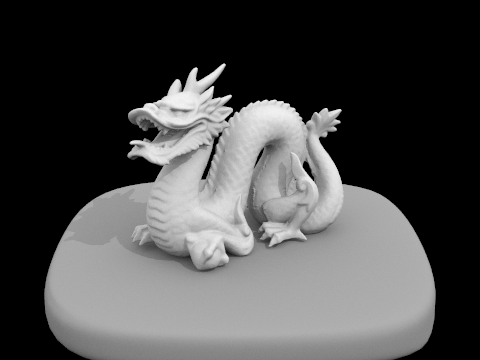

The triangle intersection algorithm I implemented is the Moller Trumbore algorithm. The Moller Trumbore algorithm takes advantage of barycentric coordinates of a triangle as well as the parameterization of a ray to solve out vector equation using matrix and vector manipulations.

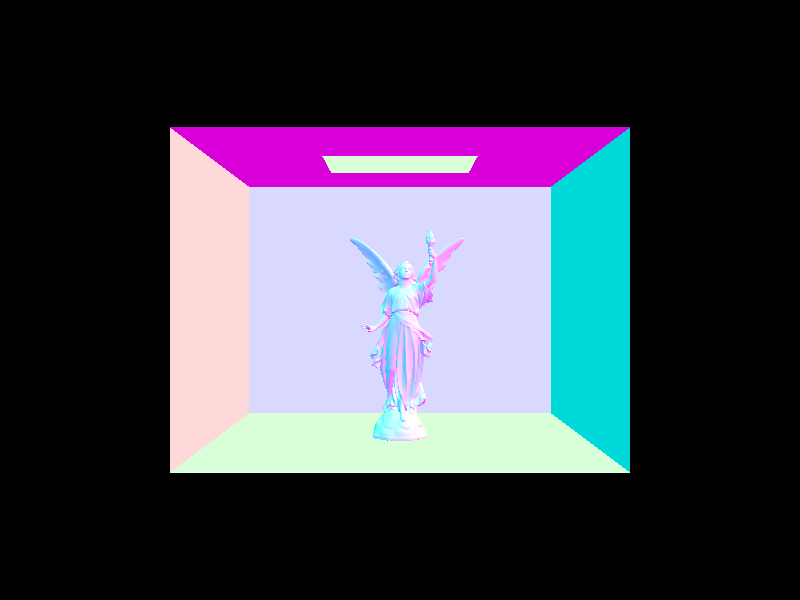

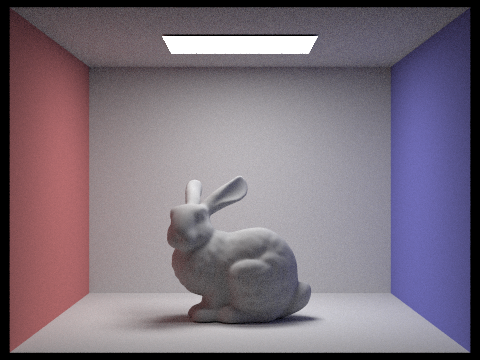

|

|

For my BVH construction algorithm, I check to see if the current node is a leaf node. If not, I split the bounding box in half based on the box's largest dimension. Then I sort the primitives on either side of the split threshold and recurse on each side to obtain a set of leaf nodes.

For my BVH intersection algorithm, I first check to see if the ray intersects the group bounding box. If it does, and the node is a leaf, I iterate through the primitives in the node to check for intersections, letting the intersect functions I wrote for Part 1 handle the assignments for me. If the node is not a leaf, then I recurse into the child nodes.

|

|

Without the acceleration structure, the cow took 1178 seconds to render, casting 473033 rays and averaging 5335 intersection tests per ray. The teapot took 423 seconds to render, casting 473211 rays and averaging 2190 intersection tests per ray. After implementing the BVH acceleration structure, the cow took 1.99 seconds to render, casting 478498 rays and averaging 3.26 intersection tests per ray. The teapot took 1.86 seconds to render, casting 476777 rays and averaging 2.73 intersection tests per ray.

Instead of checking for intersection with every primitive object in the scene, the BVH structure separates the objects into a tree stucture, meaning we run, on average, in O(log(n)) time to search for an intersection leaf bounding box and max_leaf_size intersection tests with primitives in that leaf node. This is significantly better than running n intersection tests per ray.

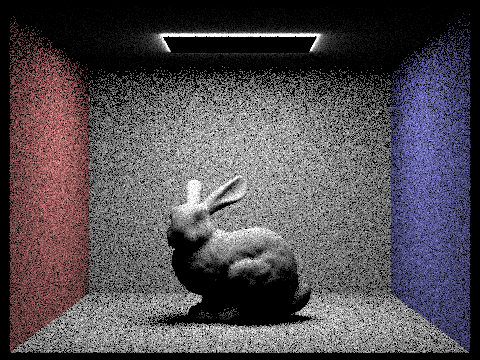

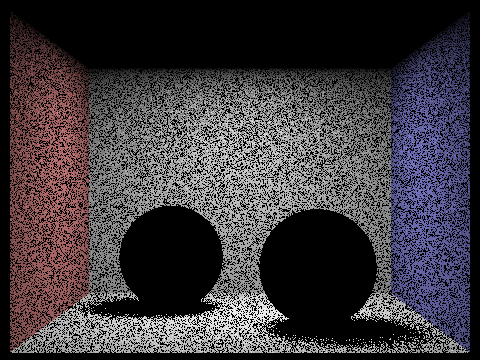

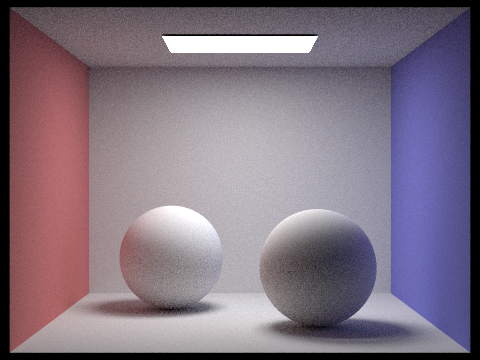

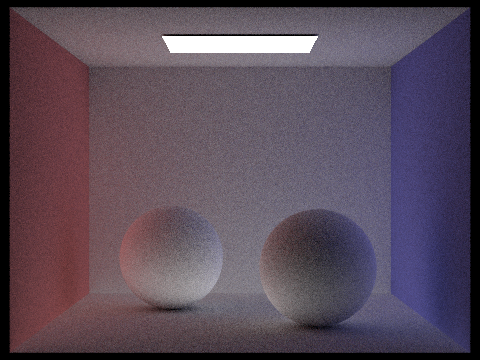

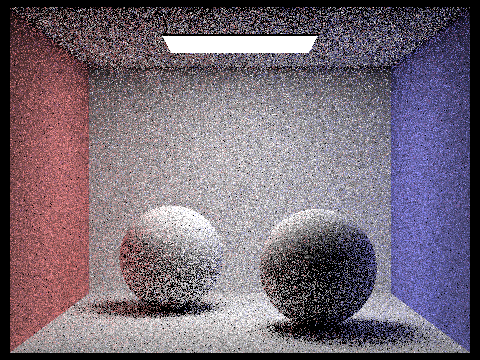

For the hemisphere direct lighting function, I took num_sample samples on a uniform hemisphere and casted rays in each direction. Then I check if the rays hit anything, and if they do, convert the incoming radiance from the intersection point into outgoing irradiance by multiplying by the BSDF and the cosine term from Lambert's cosine law, while averaging the total irradiance.

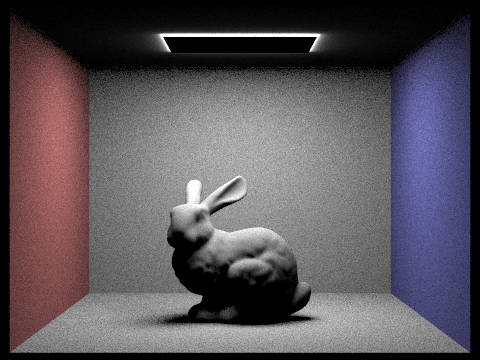

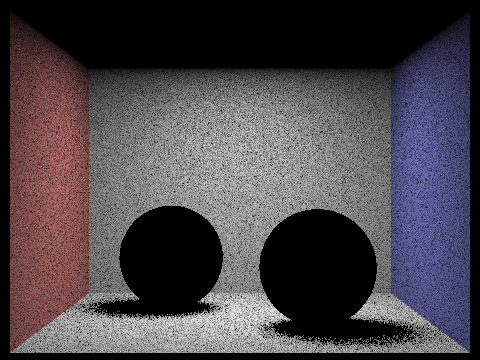

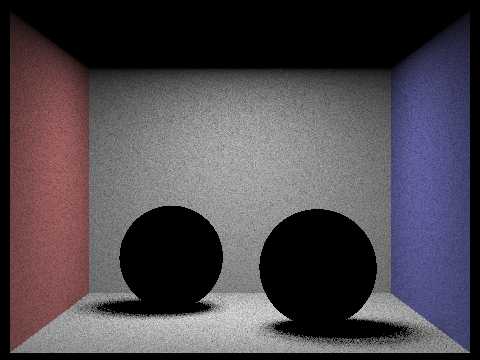

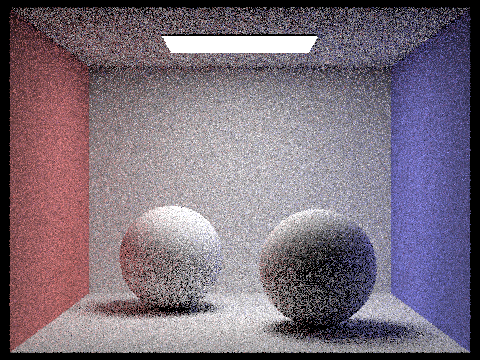

For the importance direct lighting function, I looped through each scene light, and sampled rays from the hit point to the scene light. Then I make sure that the light is on the correct side of the intersection point and compute the shadow ray by checking for objects between the hit point and the light. Finally, I sum up all appropriate irradiance values according to the Monte Carlo integration.

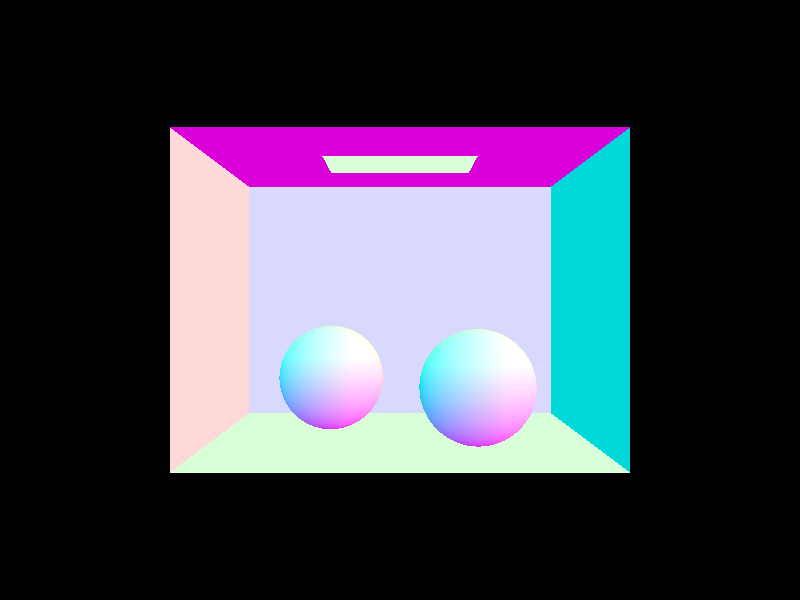

|

|

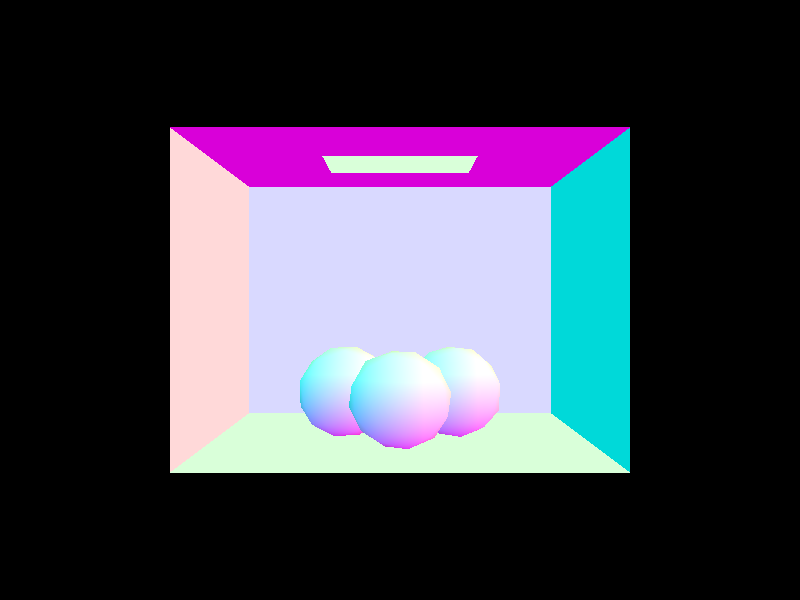

|

|

As you increase the number of light rays, the noise the shadows significantly decreases, and the overall picture is cleaner.

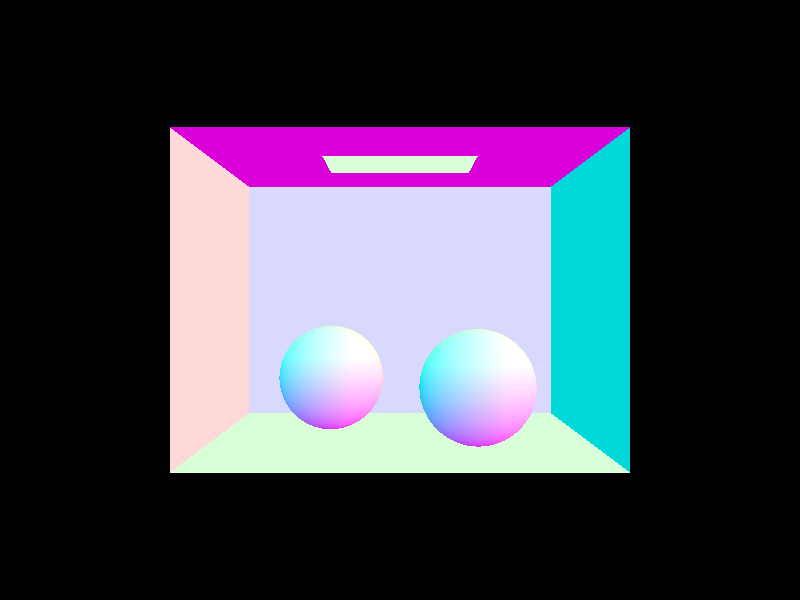

|

|

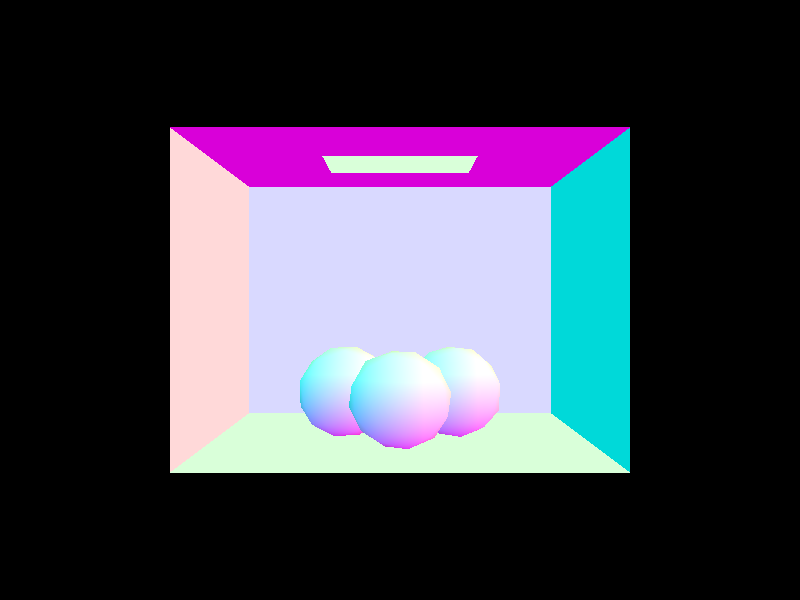

|

|

Between uniform hemisphere sampling and lighting sampling, lighting sampling produces a clearer picture with significantly less noise per pixel. This is because with lighting sampling, we guarantee that the sampled ray comes from a light source and not a random point in the scene, meaning each ray that contributes to the lighting/shadows is more significant than a random ray along a hemisphere.

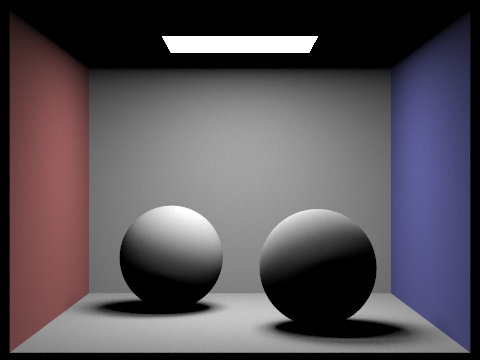

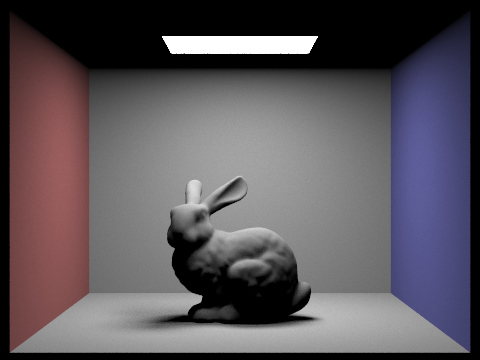

For the zero bounce case, the irradiance is simply the emission of the bsdf. The one bounce case is direct illumination. For at least one bounce, I first sample the bsdf and check termination conditions - recursion depth and Russian roulette with probability 0.3. If the recursion does not terminate, I cast a ray from the bsdf sample, check for intersections, and recurse on the intersection point if the ray hits something. Upon termination, return the direct illumination value of the current recursion level.

|

|

|

|

Changing ray recursive depth:

|

|

|

|

|

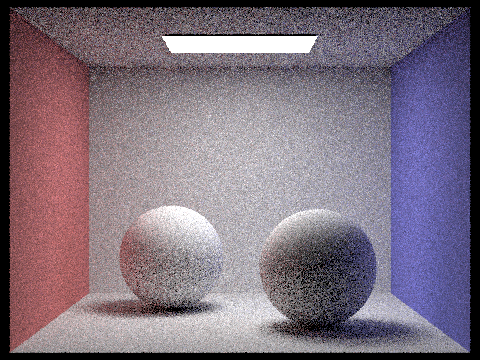

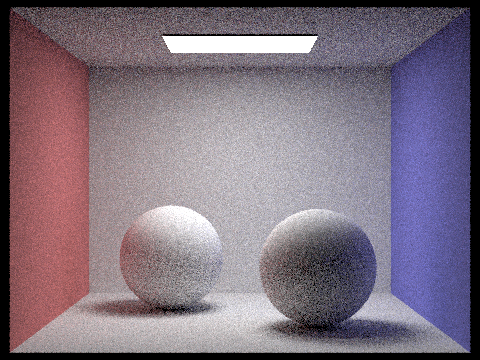

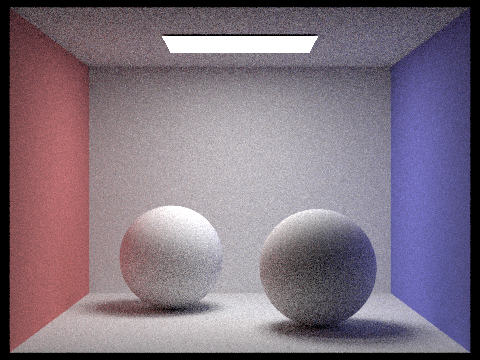

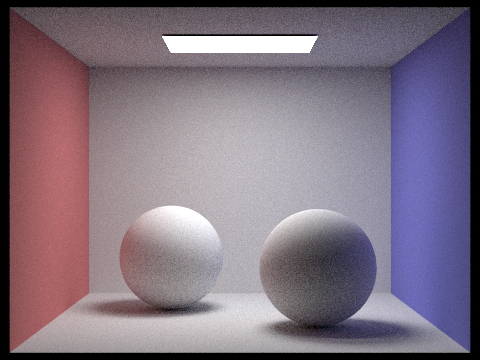

Changing sample rate:

|

|

|

|

|

|

|

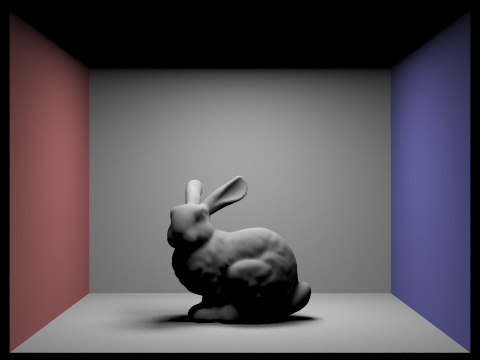

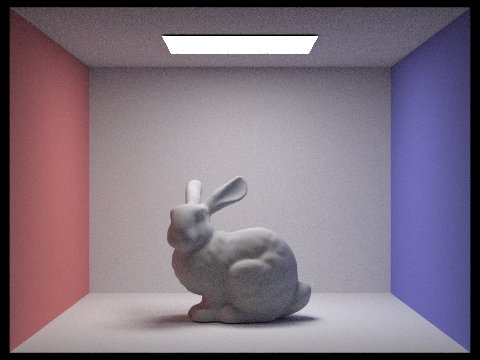

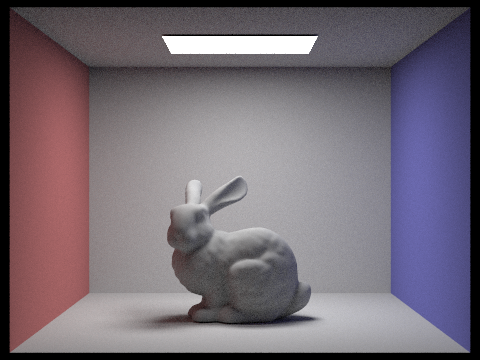

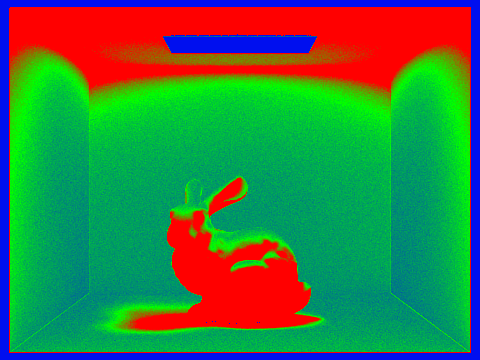

For adaptive sampling, I simply added a segment for computing the statistics in the raytrace pixel loop. When the estimated radiance returns, I get the sample's illuminance and keep a running sum and running squared sum. After each batch of samples, I compute the mean, variance, and convergence condition. If the approximate convergence is achieved within num_samples, we update the sampleCountBuffer and return the appropriate Spectrum average.

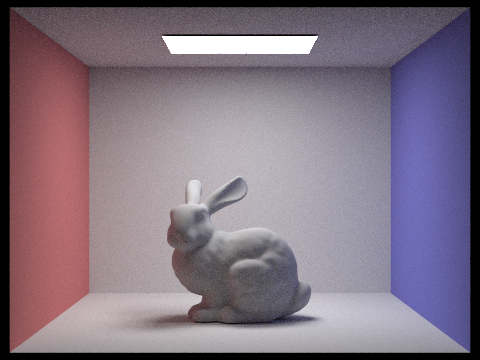

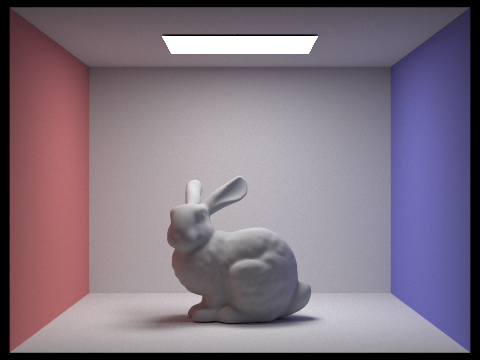

Bunny with 2048 samples, 64 samples per batch with 0.05 tolerance, 1 light ray, and 5 depth.

|

|